QA Pipeline Incident Report

Executive Summary

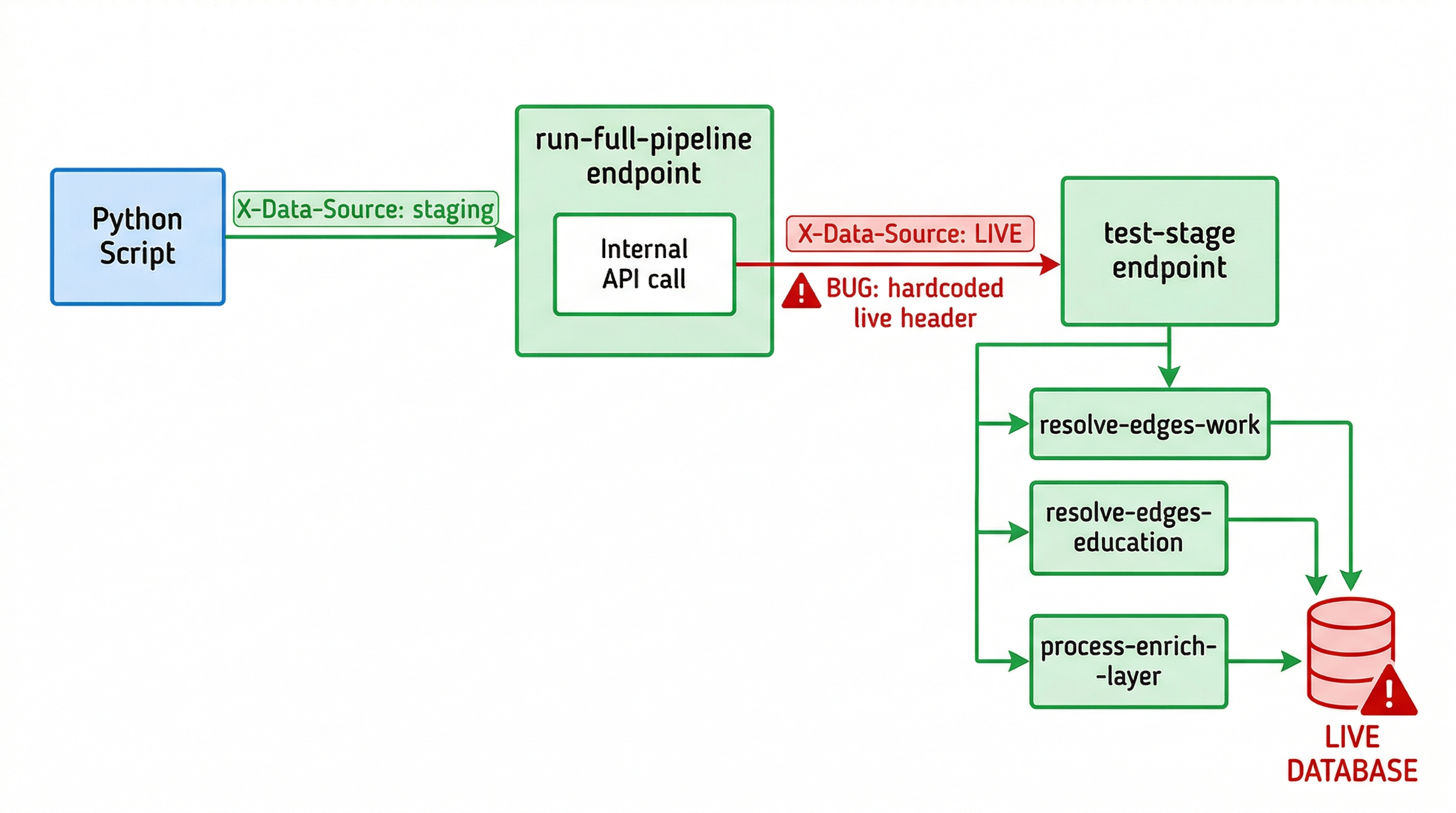

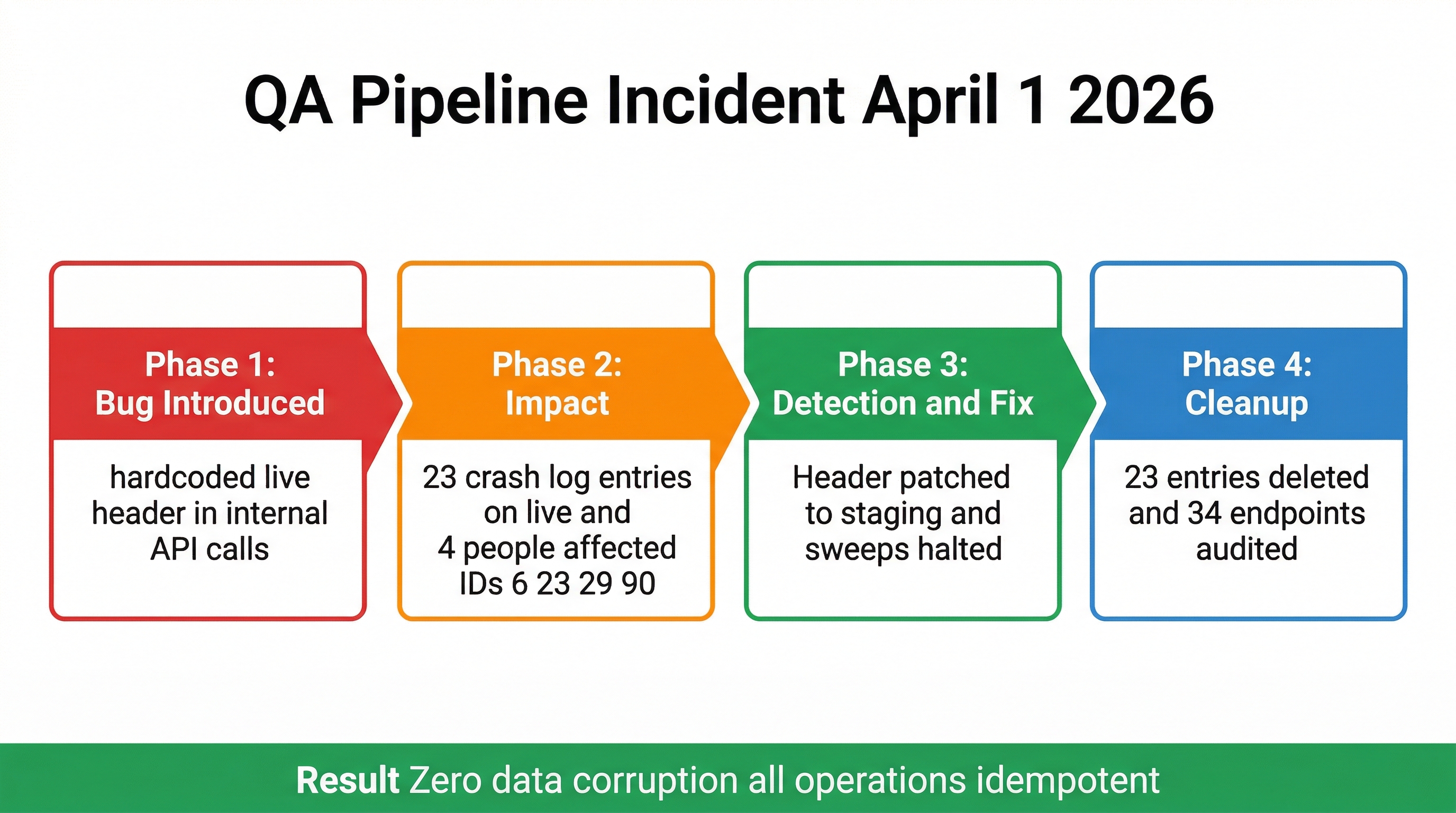

During QA enrichment testing on staging, an internal API call within the qa/run-full-pipeline endpoint had a hardcoded X-Data-Source: live header. This caused enrichment stages 1–8 (out of 9) to execute against the live database instead of staging.

Once the bug was detected, both running sweeps were killed immediately, the header was patched to staging in both affected endpoints, and all 23 QA-caused crash log entries on live were deleted. No data was corrupted or lost.

What Happened

The QA system has a multi-layer architecture:

1. Python sweep scripts call qa/run-full-pipeline with X-Data-Source: staging.
2. run-full-pipeline internally calls qa/test-stage for each of 8 enrichment stages via api.request.
3. test-stage dispatches to individual QA functions via function.run.

The bug was in step 2. The api.request inside run-full-pipeline had a hardcoded header:

    // Inside qa/run-full-pipeline endpoint
    api.request {
      url = $base_url
      method = "POST"
      params = $body
      headers = []
        |push:"Content-Type: application/json"
        |push:"X-Data-Source: live" // ← BUG: should be "staging"
        |push:"X-Branch: v1"
      timeout = 300
    }

This meant that while the outer endpoint's own queries (skip-check, pass logging) ran on staging correctly, the actual enrichment work for stages 1–8 ran against live.
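One way to avoid this class of bug is to derive the inner request's headers from the incoming request instead of writing the data source as a literal. The Python sketch below is illustrative only. The endpoint itself is not written in Python, and build_internal_headers, ALLOWED_SOURCES, and the staging fallback are hypothetical names and choices, not part of the actual codebase.

```python
from typing import Mapping

# Hypothetical set of data sources the platform accepts.
ALLOWED_SOURCES = {"staging", "live"}


def build_internal_headers(
    incoming: Mapping[str, str], default_source: str = "staging"
) -> dict[str, str]:
    """Build headers for the internal call, inheriting the caller's data source."""
    # HTTP header names are case-insensitive, so normalize before the lookup.
    normalized = {name.lower(): value for name, value in incoming.items()}
    source = normalized.get("x-data-source", default_source).strip().lower()
    if source not in ALLOWED_SOURCES:
        raise ValueError(f"unexpected data source: {source!r}")
    return {
        "Content-Type": "application/json",
        "X-Data-Source": source,  # inherited from the caller, never hardcoded
        "X-Branch": "v1",
    }
```

With this shape, a sweep script that sends X-Data-Source: staging gets staging on every inner call, and a request with no header at all falls back to staging rather than live.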

Incident Timeline

- […] qa/resolve-edges-work function name and null master_person_id. Investigation revealed the hardcoded live header.

- […] X-Data-Source: live to X-Data-Source: staging. Deployed immediately.

Impact Assessment

What ran on live

Stages 1–8 of the person enrichment pipeline ran against live data for people who had not yet been processed. These stages are:

| Stage | Function | What it does | Destructive? |
|---|---|---|---|
| 1 | process-enrich-layer | Fills missing names, bios, skills, avatars | No (additive) |
| 2 | resolve-edges-education | Links education records to institutions | No (additive) |
| 3 | resolve-edges-work | Links work records to companies, sets best role | No (additive) |
| 4 | resolve-edges-certifications | Links certifications | No (additive) |
| 5 | resolve-edges-projects | Links projects/publications | No (additive) |
| 6 | resolve-edges-honor | Links honors/awards | No (additive) |
| 7 | resolve-edges-volunteering | Links volunteering records | No (additive) |
| 8 | complete-person-enrich | Finalizes enrichment, marks complete | No (additive) |

All enrichment operations are idempotent. They check for existing records before inserting, fill missing links, and update timestamps. Running them on already-enriched live data produces the same result — no duplicates, no deletions, no corruption.
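The check-before-insert pattern described above can be sketched as follows. This is a minimal illustration using Python's built-in sqlite3 module, not the pipeline's actual code: the education_edge table, its columns, and the add_edge_if_missing helper are hypothetical.

```python
import sqlite3


def make_demo_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical edge table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE education_edge (person_id INTEGER, institution_id INTEGER)"
    )
    return conn


def add_edge_if_missing(
    conn: sqlite3.Connection, person_id: int, institution_id: int
) -> bool:
    """Insert the edge only if it is not already present; return True if a row was added."""
    existing = conn.execute(
        "SELECT 1 FROM education_edge WHERE person_id = ? AND institution_id = ?",
        (person_id, institution_id),
    ).fetchone()
    if existing is not None:
        return False  # re-running the stage leaves the table unchanged
    conn.execute(
        "INSERT INTO education_edge (person_id, institution_id) VALUES (?, ?)",
        (person_id, institution_id),
    )
    return True
```

Calling add_edge_if_missing twice with the same arguments adds one row on the first call and none on the second, which is the property the impact assessment relies on.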

People who crashed (4 total)

Only 4 live people experienced crashes in resolve-edges-work (stage 3). The crash occurred in the "best current role" selection logic and prevented the write from completing — meaning no data was changed for these people.

| Person ID | Crash count | Function | Data changed? |
|---|---|---|---|
| 6 | 4 entries | qa/resolve-edges-work + mvp/work/choose-best-current-role | No (crashed before write) |
| 23 | 10 entries | qa/resolve-edges-work + mvp/work/choose-best-current-role | No (crashed before write) |
| 29 | 4 entries | qa/resolve-edges-work + mvp/work/choose-best-current-role | No (crashed before write) |
| 90 | 6 entries | qa/resolve-edges-work + mvp/work/choose-best-current-role | No (crashed before write) |

People who passed (~114)

Approximately 114 live people had their enrichment stages re-run successfully. Because the enrichment functions are additive and idempotent:

- No duplicate records were created (add functions check for existing)

- No data was deleted

- Timestamps (updated_at) were refreshed on some records

- Missing edge links may have been filled (this is the normal enrichment behavior)

Company sweep

The company sweep (qa/test-company) was not affected. It uses function.run internally (inherits the caller's datasource), not api.request with hardcoded headers. All company processing ran on staging correctly.

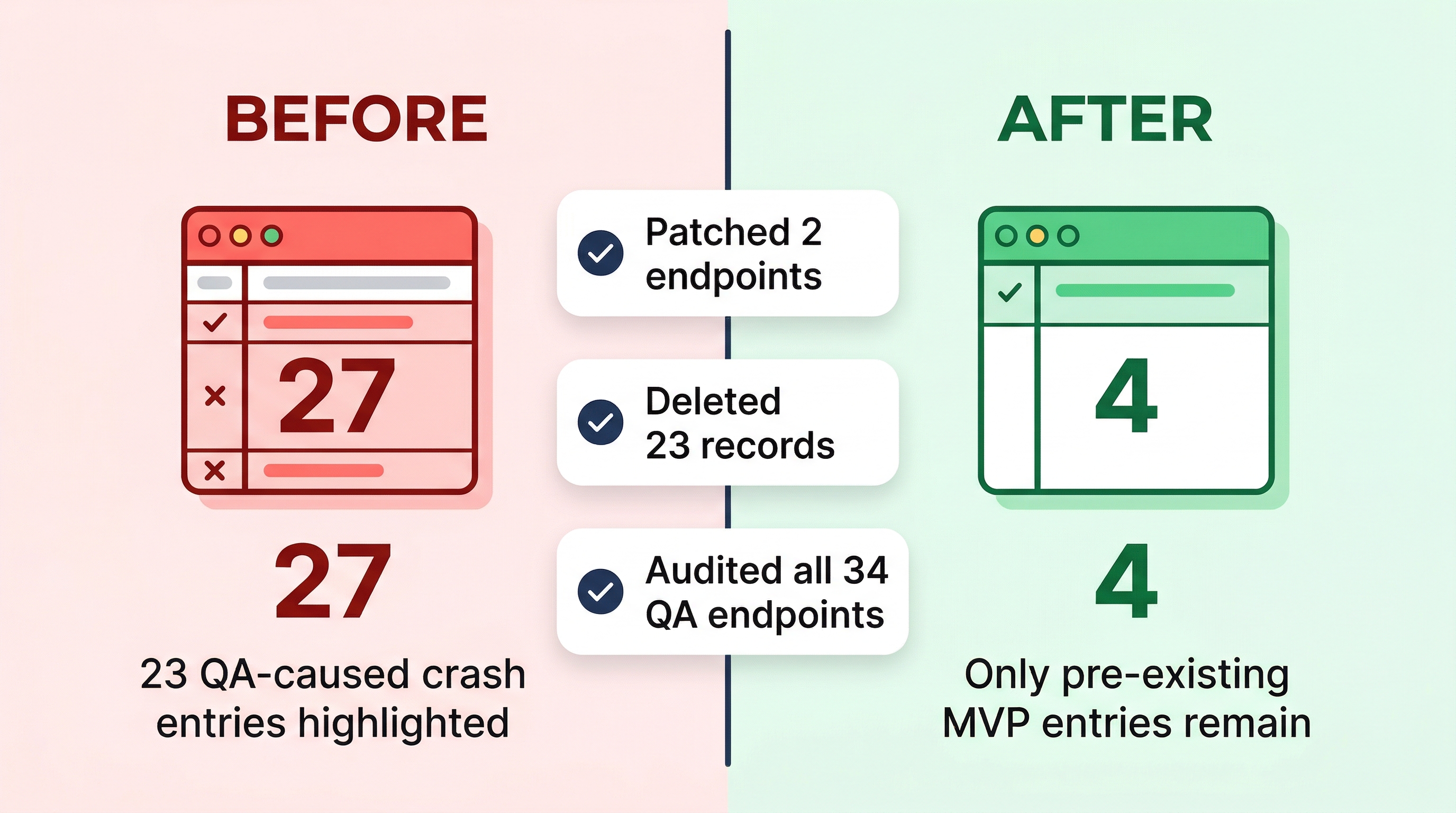

Cleanup & Proof

Crash log entries deleted (23)

IDs: 3, 4, 6, 7, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27, 28, 29

All had function_name containing qa/resolve-edges-work or mvp/work/choose-best-current-role (cascaded from QA).

Crash log after cleanup (4 remaining)

| ID | Function | Error | Source |
|---|---|---|---|
| 5 | mvp/fundable/resolve-investors-edges | Text filter type error | Pre-existing MVP |
| 8 | mvp/resolve/resolve-edges-education | Unable to decode | Pre-existing MVP |
| 9 | mvp/expertise/llm-identify-person-expertise | JSON syntax error | Pre-existing MVP |
| 10 | mvp/fundable/resolve-investors-edges | Text filter type error | Pre-existing MVP |

Endpoints patched (2)

    // BEFORE (bug):
    |push:"X-Data-Source: live"
    // AFTER (fix):
    |push:"X-Data-Source: staging"

| Endpoint | ID | Patched at | Status |
|---|---|---|---|
| qa/run-full-pipeline | 8279 | 3:40 PM | Fixed |
| qa/run-full-batch | 8280 | 3:45 PM | Fixed |

Full endpoint audit (34 endpoints)

| Status | Count | Endpoints |
|---|---|---|
| Had hardcoded live (FIXED) | 2 | 8279 (run-full-pipeline), 8280 (run-full-batch) |
| Hardcoded staging (safe) | 3 | 8296, 8273, 8275 |
| Parameterized, defaults staging | 2 | 8282 (self-fix-pipeline), 8281 (self-fix-stage) |
| Staging precondition guard | 1 | 8271 (safe-enrich-person) |
| No api.request / read-only | 26 | All remaining endpoints |
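An audit like this can be repeated mechanically if the endpoint definitions are available as text. The sketch below assumes they have been exported into a mapping of endpoint name to source text. That export step, the find_hardcoded_live helper, and the sample inputs are hypothetical and are not how the audit above was actually performed.

```python
import re

# Matches a literal live data-source header such as: |push:"X-Data-Source: live"
HARDCODED_LIVE = re.compile(r"X-Data-Source:\s*live\b", re.IGNORECASE)


def find_hardcoded_live(endpoints: dict[str, str]) -> list[str]:
    """Return the names of endpoints whose source text hardcodes the live header."""
    return sorted(
        name for name, source in endpoints.items() if HARDCODED_LIVE.search(source)
    )


if __name__ == "__main__":
    # Made-up sample definitions, for illustration only.
    sample = {
        "qa/example-a": 'headers = []|push:"X-Data-Source: live"',
        "qa/example-b": 'headers = []|push:"X-Data-Source: staging"',
        "qa/example-c": "function.run",
    }
    print(find_hardcoded_live(sample))
```

A text scan of this kind only catches literal headers. Endpoints that compute the data source at runtime still need manual review.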

Live log_qa_enrichment — not polluted

Queried log_qa_enrichment on live for function_name LIKE 'qa/%': returned 4 records, all from the earlier self-fix system (function: qa/process-enrich-layer-safe, status: diagnosed). Zero entries from qa/run-full-pipeline.

The outer endpoint's logging ran on staging correctly because it received X-Data-Source: staging from our Python scripts.

Root Cause

The qa/run-full-pipeline endpoint was created on March 30 with the internal api.request headers hardcoded to X-Data-Source: live. This was a copy-paste error during endpoint creation — the header should have been staging to match the outer request context.

The same error was present in qa/run-full-batch, which was created the same day.

The bug was not caught earlier because:

- The outer endpoint's own queries (skip-check, pass logging) ran on staging correctly, masking the issue

- Individual stage tests via qa/test-stage worked correctly (no hardcoded headers)

- The Python sweep scripts correctly sent staging headers, providing a false sense of security

Prevention Measures

| Measure | Status |
|---|---|
| Patch all endpoints with hardcoded live headers | Done (2 endpoints) |
| Full audit of all 34 QA endpoints | Done (no others found) |
| Add staging precondition guards to critical write endpoints | Recommended |
| Parameterize data_source with staging default (like self-fix-stage) | Recommended |
| Add automated test to verify QA endpoints don't hit live | Recommended |
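The recommended staging precondition guard could look roughly like the Python sketch below. It is an illustration of the idea rather than the guard used by safe-enrich-person, and LiveDataSourceError and require_staging are hypothetical names.

```python
class LiveDataSourceError(RuntimeError):
    """Raised when a QA-only operation is asked to run against a non-staging source."""


def require_staging(data_source: str) -> None:
    """Abort before any write unless the resolved data source is staging."""
    if data_source.strip().lower() != "staging":
        raise LiveDataSourceError(
            f"QA write refused: data source is {data_source!r}, expected 'staging'"
        )
```

Placed at the top of each write path, a guard like this turns a misrouted request into an immediate failure instead of a silent write to live. The same function can back an automated test that calls each QA entry point with a live header and asserts that it is rejected.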

Conclusion

No data was corrupted, deleted, or lost on live.

The enrichment operations that ran on live are the same idempotent operations that normally run during the live enrichment pipeline. The 4 people who crashed had their writes prevented by the crash itself. All 23 spurious crash log entries have been cleaned up. Both buggy endpoints are patched. All 34 QA endpoints have been audited and confirmed safe.